What is parallel testing?

Parallel testing is your ticket to faster testing and a quicker turnaround in deployments. When testing websites or applications, remember that time is a factor: you always have a finite amount of time to test before a deployment or to increase your coverage. Testing 100% of an application is a noble cause, but no developer wants to spend more time testing than developing their product. Parallel testing lets you get more testing done in a tighter window.

With Continuous Integration, testers and developers are asked to constantly write new test scripts for different features and test cases. These scripts take time to run, and a growing number of test cases running against a growing number of environments can spell doom for a deployment. Think testing is only going to take 2 days? Wrong: your tests have been running for a week, and they still have a day left. So how do we speed up testing and do more QA with less time between deployments? The answer is parallel testing.

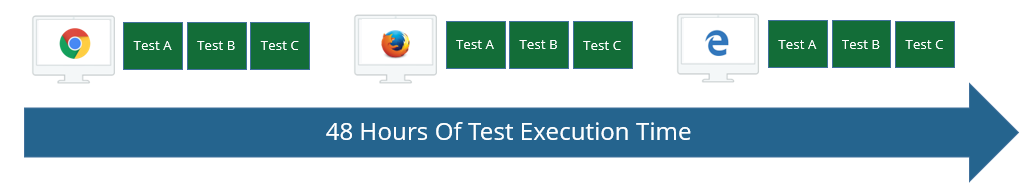

Sequential test execution

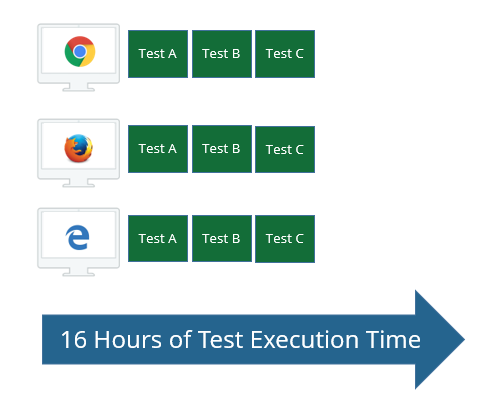

Parallel test execution

Instead of running tests sequentially, one after the other, parallel testing lets us execute multiple tests at the same time across different environments or parts of the code base. You can do this by setting up multiple VMs and other device infrastructure, or by using a cloud test service like CrossBrowserTesting.
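As a rough illustration of the difference, the following sketch simulates three tests with `time.sleep` and runs them first one after the other and then concurrently on a thread pool. The test names and durations are made up for the example; real tests would drive remote browsers instead of sleeping.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical tests: name -> simulated duration in seconds
TESTS = {"login": 0.2, "search": 0.2, "checkout": 0.2}

def run_test(name):
    """Pretend to run a test by sleeping for its simulated duration."""
    time.sleep(TESTS[name])
    return name, "passed"

def run_sequentially(names):
    # Each test starts only after the previous one has finished
    return [run_test(name) for name in names]

def run_in_parallel(names):
    # One worker per test, so all tests start at roughly the same time
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        return list(pool.map(run_test, names))

if __name__ == "__main__":
    names = list(TESTS)

    start = time.perf_counter()
    run_sequentially(names)
    sequential = time.perf_counter() - start

    start = time.perf_counter()
    run_in_parallel(names)
    parallel = time.perf_counter() - start

    print("sequential: %.2fs, parallel: %.2fs" % (sequential, parallel))
```

The sequential run takes roughly the sum of the test durations, while the parallel run takes roughly as long as the slowest single test.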

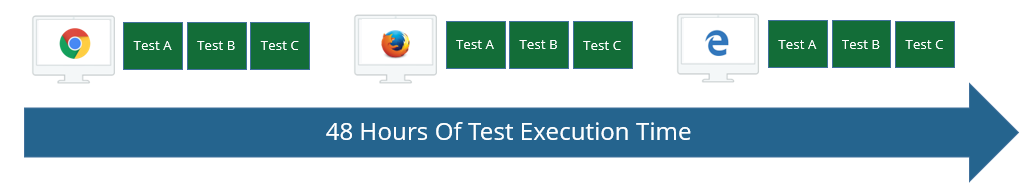

Parallel environments

The growing number of devices and browsers your customers use can be a challenge when you are trying to test quickly and efficiently. Let us imagine a real-world example: in release 3 of your product, you have 8 hours of sequential regression testing to perform before the team feels confident to deploy.

By release 5, that may have doubled, and as a bonus, your product is getting popular and is being used by more users on a growing number of devices. Before, you were testing only Chrome and Firefox, but now you need Android and iOS devices, Safari, and multiple versions of Internet Explorer. So you have 16 hours of tests and 10 different devices or browsers to cover. Run sequentially, that is 160 hours for complete test coverage before deployment. With parallel testing environments, we can run our 16 hours of tests on 10 different devices at the same time, saving 144 hours of testing time.
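The arithmetic in this example can be checked with a few lines of Python. This is a simplified model that assumes every environment runs the full suite and that environments are processed in equally sized batches; the function name and its parameters are made up for illustration.

```python
def total_hours(suite_hours, environments, parallel_slots):
    """Wall-clock hours needed to run the suite once per environment."""
    # Environments are processed in batches of `parallel_slots`
    batches = -(-environments // parallel_slots)  # ceiling division
    return suite_hours * batches

sequential = total_hours(16, 10, parallel_slots=1)
parallel = total_hours(16, 10, parallel_slots=10)
print(sequential, parallel, sequential - parallel)
```

With the numbers from the example, this prints `160 16 144`. With only 5 parallel slots, the same suite would need two batches, or 32 hours.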

Parallel execution also has the distinct advantage of isolating test cases and runs to one specific OS or browser, allowing testers and developers to dedicate their resources to serious problems with cross-platform compatibility.

Parallel testing in CrossBrowserTesting

The maximum number of automated Selenium and JavaScript unit tests (API initiated tests) that can be run concurrently is limited according to your subscription plan.

We understand the importance of running as many tests concurrently as possible to increase the velocity of your continuous integration flow. To support this, we do not place arbitrarily low constraints on the number of parallel tests.

A major advantage of our service is that we provide a combination of real operating systems and an extensive array of physical phones and tablets. While we do maintain a large number of mobile devices, they are not unlimited. Ensuring the load is spread across the configurations is important in providing availability for all our customers.

If you try running more parallel tests than your billing plan supports, then additional run requests will be queued. The maximum queue length is equal to the maximum number of parallel tests your plan allows. For example, if your plan supports 5 concurrent tests, then 5 more tests can be in the queue. Additional test requests are denied.
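The queueing rule above can be modelled with a small function. This is only a sketch for reasoning about plan limits: it counts requests and does not call the CrossBrowserTesting API, and the function name is hypothetical.

```python
def classify_requests(requested, max_parallel):
    """Split `requested` test runs into running, queued and denied counts."""
    running = min(requested, max_parallel)
    # The queue holds at most as many tests as the plan can run in parallel
    queued = min(requested - running, max_parallel)
    denied = requested - running - queued
    return {"running": running, "queued": queued, "denied": denied}

print(classify_requests(12, max_parallel=5))
```

For a plan with 5 concurrent tests, 12 simultaneous requests end up as 5 running, 5 queued, and 2 denied.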

Examples

Node.js

The following example demonstrates running multiple browsers in parallel for one Selenium script using Node.js and WebDriverJS.

JavaScript

// Google Search - Selenium Example Script

//See https://github.com/SeleniumHQ/selenium/wiki/WebDriverJs for detailed instructions

var username = 'you@example.com'; //placeholder: replace with your email address

var authkey = '12345'; //replace with your authkey

var webdriver = require('selenium-webdriver'),

SeleniumServer = require('selenium-webdriver/remote').SeleniumServer,

request = require('request');

var remoteHub = "http://" + username + ":" + authkey + "@hub.crossbrowsertesting.com:80/wd/hub";

//add multiple browsers to run in parallel here

var browsers = [

{ browserName: 'firefox', os_api_name: 'Win7', browser_api_name: 'FF27', screen_resolution: '1024x768' },

{ browserName: 'chrome', os_api_name: 'Mac10.9', browser_api_name: 'Chrome40x64', screen_resolution: '1024x768' },

{ browserName: 'internet explorer', os_api_name: 'Win8.1', browser_api_name: 'IE11', screen_resolution: '1024x768' }

];

var flows = browsers.map(function(browser) {

return webdriver.promise.createFlow(function() {

var caps = {

name : 'Google Search - Selenium Test Example',

build : '1.0',

browserName : browser.browserName, // <---- this needs to be the browser type in lower case: firefox, internet explorer, chrome, opera, or safari

browser_api_name : browser.browser_api_name,

os_api_name : browser.os_api_name,

screen_resolution : browser.screen_resolution,

record_video : "true",

record_network : "false", // set to "true" to record network traffic

record_snapshot : "false",

username : username,

password : authkey

};

var driver = new webdriver.Builder()

.usingServer(remoteHub)

.withCapabilities(caps)

.build();

//need sessionId before any api calls

driver.getSession().then(function(session){

var sessionId = session.id_;

driver.get('http://www.google.com');

var element = driver.findElement(webdriver.By.name('q'));

element.sendKeys('cross browser testing');

element.submit();

driver.call(takeSnapshot, null, sessionId);

driver.getTitle().then(function(title) {

if (title !== ('cross browser testing - Google Search')) {

throw Error('Unexpected title: ' + title);

}

});

driver.quit();

driver.call(setScore, null, 'pass', sessionId);

});

});

});

webdriver.promise.fullyResolved(flows).then(function() {

console.log('All tests passed!');

});

webdriver.promise.controlFlow().on('uncaughtException', function(err){

console.error('There was an unhandled exception! ' + err);

});

//Call API to set the score

function setScore(score, sessionId) {

//webdriver has built-in promise to use

var deferred = webdriver.promise.defer();

var result = { error: false, message: null }

if (sessionId){

request({

method: 'PUT',

uri: 'https://crossbrowsertesting.com/api/v3/selenium/' + sessionId,

body: {'action': 'set_score', 'score': score },

json: true

},

function(error, response, body) {

if (error) {

result.error = true;

result.message = error;

}

else if (response.statusCode !== 200){

result.error = true;

result.message = body;

}

else{

result.error = false;

result.message = 'success';

}

deferred.fulfill(result);

})

.auth(username, authkey);

}

else{

result.error = true;

result.message = 'Session Id was not defined';

deferred.fulfill(result);

}

return deferred.promise;

}

//Call API to get a snapshot

function takeSnapshot(sessionId) {

//webdriver has built-in promise to use

var deferred = webdriver.promise.defer();

var result = { error: false, message: null }

if (sessionId){

request.post(

'https://crossbrowsertesting.com/api/v3/selenium/' + sessionId + '/snapshots',

function(error, response, body) {

if (error) {

result.error = true;

result.message = error;

}

else if (response.statusCode !== 200){

result.error = true;

result.message = body;

}

else{

result.error = false;

result.message = 'success';

}

//console.log('fulfilling promise in takeSnapshot')

deferred.fulfill(result);

}

)

.auth(username,authkey);

}

else{

result.error = true;

result.message = 'Session Id was not defined';

deferred.fulfill(result); //never call reject as we don't need this to actually stop the test

}

return deferred.promise;

}

Python

There are two different ways to run tests in parallel:

Multi-threaded

Python

from threading import Thread
from selenium import webdriver
import time

USERNAME = "USERNAME"
API_KEY = "API_KEY"

def get_browser(caps):
    return webdriver.Remote(
        desired_capabilities=caps,
        command_executor="http://%s:%s@hub.crossbrowsertesting.com:80/wd/hub" % (USERNAME, API_KEY)
    )

browsers = [
    {"platform": "Windows 7 64-bit", "browserName": "Internet Explorer", "version": "10", "name": "Python Parallel"},
    {"platform": "Windows 8.1", "browserName": "Chrome", "version": "50", "name": "Python Parallel"},
]

browsers_waiting = []

def get_browser_and_wait(browser_data):
    print("starting %s\n" % browser_data["browserName"])
    browser = get_browser(browser_data)
    browser.get("http://crossbrowsertesting.com")
    browsers_waiting.append({"data": browser_data, "driver": browser})
    print("%s ready" % browser_data["browserName"])
    # keep this session busy until every browser has started
    while len(browsers_waiting) < len(browsers):
        print("working on %s.... please wait" % browser_data["browserName"])
        browser.get("http://crossbrowsertesting.com")
        time.sleep(3)

threads = []
for i, browser in enumerate(browsers):
    thread = Thread(target=get_browser_and_wait, args=[browser])
    threads.append(thread)
    thread.start()

for thread in threads:
    thread.join()

print("all browsers ready")
for i, b in enumerate(browsers_waiting):
    print("browser %s's title: %s" % (b["data"]["name"], b["driver"].title))
    b["driver"].quit()

Nose

To launch Selenium scripts in parallel using Nose:

nosetests --processes=<number_of_processes> --where=tests

Here, tests is the name of the folder that stores the tests, and the test files have the following structure:

test_nose.py

#!/usr/bin/env python
import unittest
from selenium import webdriver

USERNAME = "mikeh"
API_KEY = ""

class SeleniumCBT(unittest.TestCase):
    def setUp(self):
        caps = {}
        caps['name'] = 'Python Nose Parallel'
        caps['build'] = '1.0'
        caps['browser_api_name'] = 'Chrome43x64'
        caps['os_api_name'] = 'Win8.1'
        caps['screen_resolution'] = '1024x768'

        # start the remote browser on our server
        self.driver = webdriver.Remote(
            desired_capabilities=caps,
            command_executor="http://%s:%s@hub.crossbrowsertesting.com:80/wd/hub" % (USERNAME, API_KEY)
        )
        self.driver.implicitly_wait(20)

    def test_CBT(self):
        # load the page URL
        print('Loading Url')
        self.driver.get('http://crossbrowsertesting.github.io/selenium_example_page.html')

        # check the title
        print('Checking title')
        self.assertTrue("Selenium Test Example Page" in self.driver.title)

    def tearDown(self):
        print("Done with session %s" % self.driver.session_id)
        self.driver.quit()

if __name__ == '__main__':
    unittest.main()

test_nose2.py

#!/usr/bin/env python
import unittest
from selenium import webdriver

USERNAME = "mikeh"
API_KEY = ""

class SeleniumCBT(unittest.TestCase):
    def setUp(self):
        caps = {}
        caps['name'] = 'Python Nose Parallel'
        caps['build'] = '1.0'
        caps['browser_api_name'] = 'IE10'
        caps['os_api_name'] = 'Win7x64-C2'
        caps['screen_resolution'] = '1024x768'

        # start the remote browser on our server
        self.driver = webdriver.Remote(
            desired_capabilities=caps,
            command_executor="http://%s:%s@hub.crossbrowsertesting.com:80/wd/hub" % (USERNAME, API_KEY)
        )
        self.driver.implicitly_wait(20)

    def test_CBT(self):
        # load the page URL
        print('Loading Url')
        self.driver.get('http://crossbrowsertesting.github.io/selenium_example_page.html')

        # check the title
        print('Checking title')
        self.assertTrue("Selenium Test Example Page" in self.driver.title)

    def tearDown(self):
        print("Done with session %s" % self.driver.session_id)
        self.driver.quit()

if __name__ == '__main__':
    unittest.main()

Java

There are two different ways to run tests in parallel:

- Using JUnit with a Parallelized class that multithreads the JUnit tests.

- Using TestNG and loading an XML file with different configurations.

JUnit

The following example demonstrates running multiple tests in parallel using JUnit and the Parallelized class:

Parallelized.java

package cbt.selenium.junit;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

import java.util.concurrent.TimeUnit;

import org.junit.runners.Parameterized;

import org.junit.runners.model.RunnerScheduler;

public class Parallelized extends Parameterized {

private static class ThreadPoolScheduler implements RunnerScheduler {

private ExecutorService executor;

public ThreadPoolScheduler() {

String threads = System.getProperty("junit.parallel.threads", "16");

int numThreads = Integer.parseInt(threads);

executor = Executors.newFixedThreadPool(numThreads);

}

@Override

public void finished() {

executor.shutdown();

try {

executor.awaitTermination(10, TimeUnit.MINUTES);

} catch (InterruptedException exc) {

throw new RuntimeException(exc);

}

}

@Override

public void schedule(Runnable childStatement) {

executor.submit(childStatement);

}

}

public Parallelized(Class<?> klass) throws Throwable {

super(klass);

setScheduler(new ThreadPoolScheduler());

}

}

JUnitParallel.java

package cbt.selenium.junit;

/*

* Run as a junit test

*/

import java.io.File;

import java.io.IOException;

import java.net.URL;

import java.util.LinkedList;

import org.apache.commons.io.FileUtils;

import org.junit.After;

import org.junit.Before;

import org.junit.Test;

import org.junit.runner.RunWith;

import org.junit.runners.Parameterized;

import org.openqa.selenium.By;

import org.openqa.selenium.OutputType;

import org.openqa.selenium.TakesScreenshot;

import org.openqa.selenium.WebDriver;

import org.openqa.selenium.WebElement;

import org.openqa.selenium.remote.Augmenter;

import org.openqa.selenium.remote.DesiredCapabilities;

import org.openqa.selenium.remote.RemoteWebDriver;

@RunWith(Parallelized.class)

public class JUnitParallel {

private String username = "mikeh";

private String api_key = "";

private String os;

private String browser;

@Parameterized.Parameters

public static LinkedList<String[]> getEnvironments() throws Exception {

LinkedList<String[]> env = new LinkedList<String[]>();

//define OS's and browsers

env.add(new String[]{"Win10", "chrome-latest"});

env.add(new String[]{"Win10", "ff-latest"});

//add more browsers here

return env;

}

public JUnitParallel(String os_api_name, String browser_api_name) {

this.os = os_api_name;

this.browser = browser_api_name;

}

private WebDriver driver;

@Before

public void setUp() throws Exception {

DesiredCapabilities capability = new DesiredCapabilities();

capability.setCapability("os_api_name", os);

capability.setCapability("browser_api_name", browser);

capability.setCapability("name", "JUnit-Parallel");

driver = new RemoteWebDriver(

new URL("http://" + username + ":"+ api_key + "@hub.crossbrowsertesting.com:80/wd/hub"),

capability

);

}

@Test

public void testSimple() throws Exception {

driver.get("http://www.google.com");

String title = driver.getTitle();

System.out.println("Page title is: " + title);

WebElement element = driver.findElement(By.name("q"));

element.sendKeys("CrossBrowserTesting.com");

element.submit();

driver = new Augmenter().augment(driver);

File srcFile = ((TakesScreenshot) driver).getScreenshotAs(OutputType.FILE);

try {

FileUtils.copyFile(srcFile, new File("Screenshot.png"));

} catch (IOException e) {

e.printStackTrace();

}

}

@After

public void tearDown() throws Exception {

driver.quit();

}

}

TestNG

The following example demonstrates running multiple tests in parallel using TestNG:

testng.xml

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE suite SYSTEM "http://testng.org/testng-1.0.dtd">

<suite thread-count="2" name="Suite" parallel="tests">

<test name="FirstTest">

<parameter name="os" value="Win10"/>

<parameter name="browser" value="chrome-latest"/>

<classes>

<class name="cbt.selenium.testng.TestNGSample"/>

</classes>

</test> <!-- Test -->

<test name="SecondTest">

<parameter name="os" value="Win10"/>

<parameter name="browser" value="ff-latest"/>

<classes>

<class name="cbt.selenium.testng.TestNGSample"/>

</classes>

</test> <!-- Test -->

</suite> <!-- Suite -->

TestNGSample.java

package cbt.selenium.testng;

/*

* Run from the XML suite file

*/

import org.testng.annotations.Test;

import org.testng.annotations.AfterClass;

import org.testng.annotations.BeforeClass;

import org.testng.Assert;

import java.io.File;

import java.io.IOException;

import java.net.URL;

import org.apache.commons.io.FileUtils;

import org.openqa.selenium.By;

import org.openqa.selenium.OutputType;

import org.openqa.selenium.TakesScreenshot;

import org.openqa.selenium.WebDriver;

import org.openqa.selenium.WebElement;

import org.openqa.selenium.remote.Augmenter;

import org.openqa.selenium.remote.DesiredCapabilities;

import org.openqa.selenium.remote.RemoteWebDriver;

public class TestNGSample {

private String username = "mikeh";

private String api_key = "";

private WebDriver driver;

@BeforeClass

@org.testng.annotations.Parameters(value={"os", "browser"})

public void setUp(String os,String browser) throws Exception {

DesiredCapabilities capability = new DesiredCapabilities();

capability.setCapability("os_api_name", os);

capability.setCapability("browser_api_name", browser);

capability.setCapability("name", "TestNG-Parallel");

driver = new RemoteWebDriver(

new URL("http://" + username + ":" + api_key + "@hub.crossbrowsertesting.com:80/wd/hub"),

capability);

}

@Test

public void testSimple() throws Exception {

driver.get("http://www.google.com");

System.out.println("Page title is: " + driver.getTitle());

Assert.assertEquals("Google", driver.getTitle());

WebElement element = driver.findElement(By.name("q"));

element.sendKeys("CrossBrowserTesting.com");

element.submit();

}

@AfterClass

public void tearDown() throws Exception {

driver.quit();

}

}

Ruby

The following example demonstrates running multiple tests in parallel using Ruby:

Gemfile

source 'https://rubygems.org'

gem 'selenium'

gem 'selenium-webdriver'

gem 'test-unit'

gem 'parallel_tests'

Gemfile.lock

GEM

remote: https://rubygems.org/

specs:

childprocess (0.5.6)

ffi (~> 1.0, >= 1.0.11)

ffi (1.11.1)

jar_wrapper (0.1.8)

zip

multi_json (1.11.2)

parallel (1.6.1)

parallel_tests (1.6.0)

parallel

power_assert (0.2.4)

rubyzip (1.2.3)

selenium (0.2.11)

jar_wrapper

selenium-webdriver (2.47.1)

childprocess (~> 0.5)

multi_json (~> 1.0)

rubyzip (~> 1.0)

websocket (~> 1.0)

test-unit (3.1.3)

power_assert

websocket (1.2.2)

zip (2.0.2)

PLATFORMS

ruby

DEPENDENCIES

parallel_tests

selenium

selenium-webdriver

test-unit

BUNDLED WITH

1.10.6

unittest/test1.rb

require 'selenium-webdriver'

require 'test/unit'

class SampleTest1 < Test::Unit::TestCase

def setup

username=''

authkey=''

url = "http://#{username}:#{authkey}@hub.crossbrowsertesting.com:80/wd/hub"

caps = Selenium::WebDriver::Remote::Capabilities.new

caps["name"] = "Ruby Parallel"

caps["browserName"] = "Internet Explorer"

caps["platform"] = "Windows 7"

caps["screen_resolution"] = "1024x768"

@driver = Selenium::WebDriver.for(:remote,

:url => url,

:desired_capabilities => caps)

end

def test_post

@driver.navigate.to "http://www.google.com"

element = @driver.find_element(:name, 'q')

element.send_keys "CrossBrowserTesting.com"

element.submit

end

def teardown

@driver.quit

end

end

unittest/test2.rb

require 'selenium-webdriver'

require 'test/unit'

class SampleTest2 < Test::Unit::TestCase

def setup

username='mikeh'

key=''

url = "http://#{username}:#{key}@hub.crossbrowsertesting.com:80/wd/hub"

caps = Selenium::WebDriver::Remote::Capabilities.new

caps["name"] = "Ruby Parallel"

caps["browserName"] = "Chrome"

caps["os_api_name"] = "Win8.1"

caps["version"] = "43x64"

caps["screen_resolution"] = "1024x768"

@driver = Selenium::WebDriver.for(:remote,

:url => url,

:desired_capabilities => caps)

end

def test_post

@driver.navigate.to "http://www.google.com"

element = @driver.find_element(:name, 'q')

element.send_keys "CrossBrowserTesting.com"

element.submit

end

def teardown

@driver.quit

end

end

Maximum parallel instances

When larger accounts run multiple tests across different teams, it can be hard to tell how many parallel slots are available.

If you use the API, the active test counts response tells you how many automated, manual, and headless tests are currently running, both for the whole team and for the individual member:

{

"team": {

"automated": 0,

"manual": 0,

"headless": 0

},

"member": {

"automated": 0,

"manual": 0,

"headless": 0

}

}

You can also request the maximum number of parallel tests your plan allows:

{

"automated": 5,

"manual": 5

}

The active test count returns the number of tests currently running, while the max limits response returns your team's upper limits for manual and automated tests.
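Combining the two responses gives the number of automated tests that can still be started. The following is a minimal sketch, assuming both responses have already been parsed into dictionaries shaped like the samples above; the function name and the sample numbers are illustrative and not part of the API.

```python
def available_automated_slots(active_counts, max_limits):
    """Return how many more automated tests the team can start right now."""
    active = active_counts["team"]["automated"]
    limit = max_limits["automated"]
    # Never report a negative number if the account is already at its limit
    return max(limit - active, 0)

# Made-up sample data in the same shape as the two responses
active_counts = {
    "team": {"automated": 3, "manual": 1, "headless": 0},
    "member": {"automated": 2, "manual": 0, "headless": 0},
}
max_limits = {"automated": 5, "manual": 5}

print(available_automated_slots(active_counts, max_limits))
```

Clamping the result at zero avoids starting a negative number of workers when the team is already using all of its slots.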

Here is an example of using these calls with our existing parallel Python example. Note that the number of threads created equals the number of parallel tests your team currently has available.

from queue import Queue  # Python 3; on Python 2 use "from Queue import Queue"
from threading import Thread
from selenium import webdriver
import time, requests

USERNAME = "CBT_USERNAME"
API_KEY = "CBT_AUTHKEY"

q = Queue(maxsize=0)

browsers = [
    {"os_api_name": "Win7x64-C2", "browser_api_name": "IE10", "name": "Python Parallel"},
    {"os_api_name": "Win8.1", "browser_api_name": "Chrome43x64", "name": "Python Parallel"},
    {"os_api_name": "Mac10.14", "browser_api_name": "Chrome73x64", "name": "Python Parallel"}
]

# put all of the browsers into the queue before pooling workers
for browser in browsers:
    q.put(browser)

api_session = requests.Session()
api_session.auth = (USERNAME, API_KEY)

active_tests = api_session.get("https://crossbrowsertesting.com/api/v3/account/activeTestCounts").json()['team']['automated']
max_tests = api_session.get("https://crossbrowsertesting.com/api/v3/account/maxParallelLimits").json()['automated']

print("Active selenium tests happening on overall account: " + str(active_tests) + " \nMaximum sel tests allowed on account: " + str(max_tests))

# one worker thread per parallel slot that is currently free
num_threads = max_tests - active_tests

def test_runner(q):
    while q.empty() is False:
        driver = None
        try:
            browser = q.get()
            print("%s: Starting" % browser["browser_api_name"])
            driver = webdriver.Remote(desired_capabilities=browser, command_executor="http://%s:%s@hub.crossbrowsertesting.com:80/wd/hub" % (USERNAME, API_KEY))
            print("%s: Getting page" % browser["browser_api_name"])
            driver.get("http://crossbrowsertesting.com")
            print("%s: Quitting browser and ending test" % browser["browser_api_name"])
        except Exception:
            print("%s: Error" % browser["browser_api_name"])
        finally:
            # only quit if the remote session was actually created
            if driver is not None:
                driver.quit()
            time.sleep(15)
            q.task_done()

for i in range(num_threads):
    worker = Thread(target=test_runner, args=(q,))
    worker.setDaemon(True)
    worker.start()

q.join()

Another option is to re-request availability every 20 to 30 seconds, with a random delay each time, so that concurrent test runners are less likely to claim the same free slots at the same moment.
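A polling loop along these lines could look like the following sketch. The `get_available` callback is an assumption: it stands for whatever code you use to compute the number of free parallel slots, for example from the two API calls shown earlier. The function name, parameters, and attempt limit are hypothetical.

```python
import random
import time

def wait_for_slot(get_available, min_wait=20, max_wait=30, max_attempts=10, sleep=time.sleep):
    """Poll `get_available()` until it reports at least one free slot.

    Returns the number of free slots, or 0 if none appeared in time.
    """
    for _ in range(max_attempts):
        free = get_available()
        if free > 0:
            return free
        # Random delay so that several runners do not all retry at once
        sleep(random.uniform(min_wait, max_wait))
    return 0
```

Passing `sleep` as a parameter keeps the loop easy to exercise in a unit test without actually waiting.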

Remember that our automatic queueing also holds a test request for up to 6 minutes!

See Also

Selenium

How to run headless tests on CrossBrowserTesting
